Deploying the Mask-RCNN Instance Segmentation Network with OpenVINO

openlab_4276841a

Updated 4 years ago

Model Introduction

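OpenVINO supports deploying two instance segmentation models, Mask-RCNN and yolact. The Mask-RCNN family of instance segmentation networks ships with OpenVINO and can be downloaded directly, while yolact comes from a third-party public model zoo.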

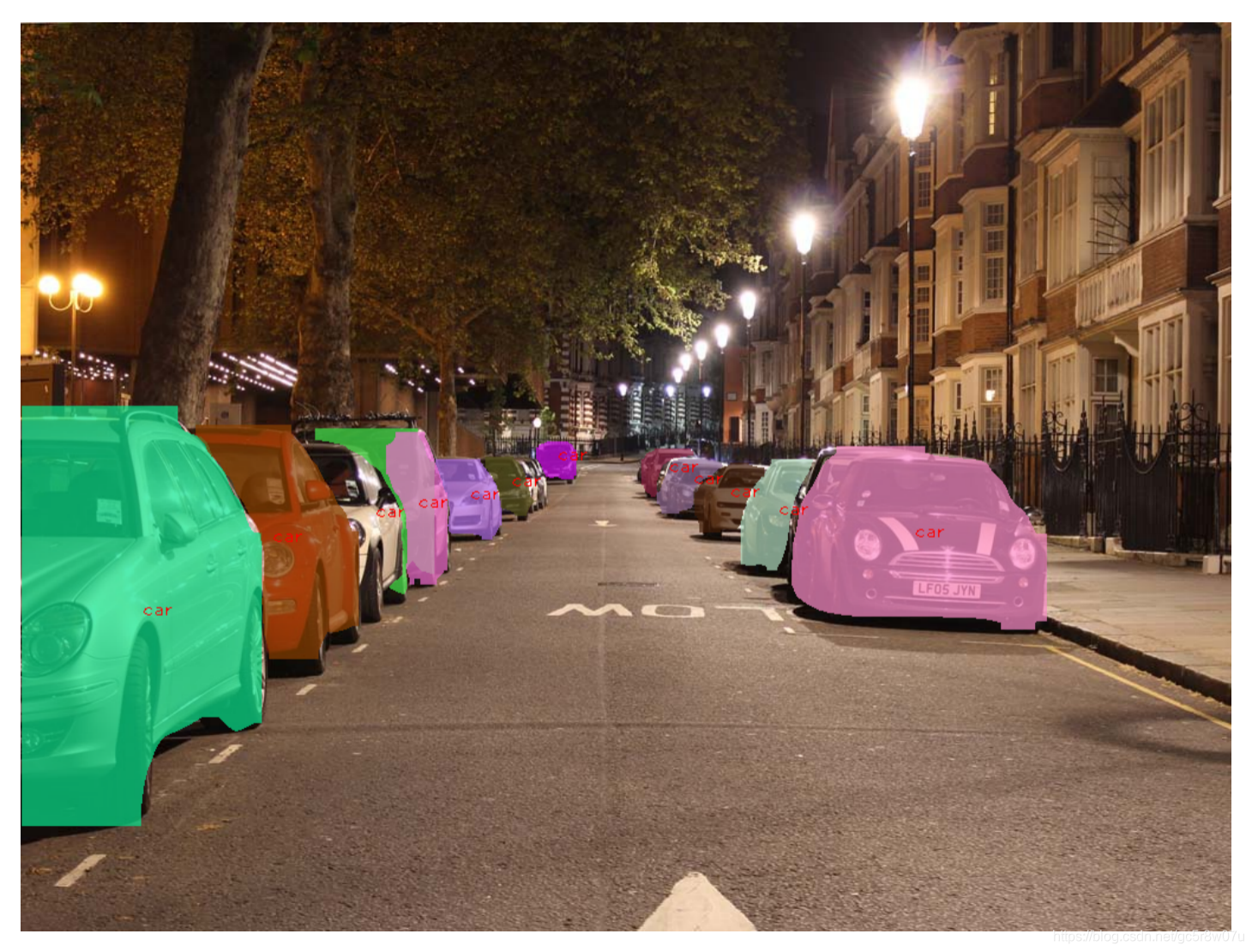

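This article uses the instance-segmentation-security-0050 model as the example. It is trained on the COCO dataset and supports instance segmentation for 80 classes, or 81 classes when the background is counted.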

When OpenVINO deploys Faster-RCNN and Mask-RCNN networks, the input is parsed through two input layers:

im_data: NCHW=[1x3x480x480]

im_info: 1x3, where the three values are H, W, and Scale=1.0
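
To make the two inputs concrete, here is a minimal standard-library sketch, not the OpenVINO API. It repacks an interleaved HWC pixel buffer (the layout OpenCV uses inside a `cv::Mat`) into the planar CHW order that `im_data` expects, and builds the three `im_info` values. The helper names `hwc_to_chw` and `make_im_info` are hypothetical and chosen only for illustration.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper: repack an interleaved HWC image into planar CHW order.
std::vector<std::uint8_t> hwc_to_chw(const std::vector<std::uint8_t>& hwc,
                                     std::size_t height, std::size_t width,
                                     std::size_t channels) {
    std::vector<std::uint8_t> chw(hwc.size());
    for (std::size_t c = 0; c < channels; ++c) {
        for (std::size_t h = 0; h < height; ++h) {
            for (std::size_t w = 0; w < width; ++w) {
                // Source index walks pixels first, destination index walks planes first.
                chw[c * height * width + h * width + w] =
                    hwc[(h * width + w) * channels + c];
            }
        }
    }
    return chw;
}

// Hypothetical helper: the three im_info values in the order H, W, Scale.
std::array<float, 3> make_im_info(std::size_t height, std::size_t width,
                                  float scale = 1.0f) {
    return { static_cast<float>(height), static_cast<float>(width), scale };
}
```

For the model described here, `make_im_info(480, 480)` yields the H, W, Scale triple with Scale left at 1.0.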

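There are four outputs. Their names, shapes, and meanings are as follows:

name: classes, shape: [100, ] class IDs of the 100 predicted boxes (integer values, used in the code below as indices into the label list)

name: scores, shape: [100, ] confidence of each of the 100 predicted boxes, with values in [0, 1]

name: boxes, shape: [100, 4] coordinates of the 100 predicted boxes, given as top-left and bottom-right corners relative to the 480x480 input

name: raw_masks, shape: [100, 81, 28, 28] instance segmentation output for each box ROI, where 81 is the number of classes (including background) and 28x28 is the ROI size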
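
Because `raw_masks` is a flat, row-major buffer, picking out the mask for one box and one class comes down to offset arithmetic. The sketch below assumes the [N, C, H, W] layout listed above; `mask_offset` and `extract_mask` are hypothetical helper names, not part of OpenVINO.

```cpp
#include <cstddef>
#include <vector>

// Offset of the first element of the mask for box n and class c
// in a flat, row-major buffer of shape [N, C, H, W].
std::size_t mask_offset(std::size_t n, std::size_t c, std::size_t num_classes,
                        std::size_t mask_h, std::size_t mask_w) {
    const std::size_t plane = mask_h * mask_w;            // one class heatmap
    const std::size_t box_stride = num_classes * plane;   // all heatmaps of one box
    return n * box_stride + c * plane;
}

// Copy one mask_h x mask_w heatmap out of the flat buffer.
std::vector<float> extract_mask(const std::vector<float>& raw_masks,
                                std::size_t n, std::size_t c,
                                std::size_t num_classes,
                                std::size_t mask_h, std::size_t mask_w) {
    const auto start = static_cast<std::ptrdiff_t>(
        mask_offset(n, c, num_classes, mask_h, mask_w));
    const auto count = static_cast<std::ptrdiff_t>(mask_h * mask_w);
    return std::vector<float>(raw_masks.begin() + start,
                              raw_masks.begin() + start + count);
}
```

With the documented shape, the masks of consecutive boxes are 81 * 28 * 28 floats apart, so `mask_offset(1, 0, 81, 28, 28)` is 63504.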

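All of the above comes from the official documentation for the model. In my own runs, however, the raw_masks output of actual inference has shape 100x81x14x14. Has the documentation simply not been updated?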
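
One way to stay independent of the documented mask size is to read the dimensions from the output at runtime, which is what the demo code does with `getDims()`. The sketch below assumes the dims arrive as a four-element vector in [N, C, H, W] order; `MaskShape` and `parse_mask_shape` are hypothetical names used only for illustration.

```cpp
#include <cstddef>
#include <stdexcept>
#include <vector>

// Hypothetical holder for the raw_masks dimensions.
struct MaskShape {
    std::size_t boxes;
    std::size_t classes;
    std::size_t height;
    std::size_t width;

    // Number of floats that belong to a single box (all class heatmaps).
    std::size_t elements_per_box() const { return classes * height * width; }
};

// Hypothetical helper: interpret a dims vector as [N, C, H, W].
MaskShape parse_mask_shape(const std::vector<std::size_t>& dims) {
    if (dims.size() != 4) {
        throw std::invalid_argument("expected 4 dims in [N, C, H, W] order");
    }
    return { dims[0], dims[1], dims[2], dims[3] };
}
```

Code written this way handles both the 28x28 size from the documentation and the 14x14 size observed at inference time without any change.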

Code demo:

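The network has more than one input layer and more than one output layer, so to keep things simple I use two for loops to set the precision of the input and output data, and then fetch the data of each output layer after inference by its hardcoded name. The code first reads the class, then uses the class ID and the box index to locate the instance segmentation mask directly, generates a random color, and blends the mask with the box ROI of the original image to produce the instance segmentation result. The complete demo code:

#include <inference_engine.hpp>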

#include <opencv2/opencv.hpp>

#include <fstream>

using namespace InferenceEngine;

void read_coco_labels(std::vector<std::string> &labels) {

std::string label_file = "D:/project***odels/coco_labels.txt";

std::ifstream fp(label_file);

if (!fp.is_open())

{

printf("could not open file...\n");

exit(-1);

}

std::string name;

while (!fp.eof())

{

std::getline(fp, name);

if (name.length())

labels.push_back(name);

}

fp.close();

}

template <typename T>

void matU8ToBlob(const cv::Mat& orig_image, InferenceEngine::Blob::Ptr& blob, int batchIndex = 0) {

InferenceEngine::SizeVector blobSize = blob->getTensorDesc().getDims();

const size_t width = blobSize[3];

const size_t height = blobSize[2];

const size_t channels = blobSize[1];

InferenceEngine::MemoryBlob::Ptr mblob = InferenceEngine::as<InferenceEngine::MemoryBlob>(blob);

if (!mblob) {

THROW_IE_EXCEPTION << "We expect blob to be inherited from MemoryBlob in matU8ToBlob, "

<< "but by fact we were not able to cast inputBlob to MemoryBlob";

}

// locked memory holder should be alive all time while access to its buffer happens

auto mblobHolder = mblob->wmap();

T *blob_data = mblobHolder.as<T *>();

cv::Mat resized_image(orig_image);

if (static_cast<int>(width) != orig_image.size().width ||

static_cast<int>(height) != orig_image.size().height) {

cv::resize(orig_image, resized_image, cv::Size(width, height));

}

int batchOffset = batchIndex * width * height * channels;

for (size_t c = 0; c < channels; c++) {

for (size_t h = 0; h < height; h++) {

for (size_t w = 0; w < width; w++) {

blob_data[batchOffset + c * width * height + h * width + w] =

resized_image.at<cv::Vec3b>(h, w)[c];

}

}

}

}

int main(int argc, char** argv) {

std::string xml = "D:/project***odels/instance-segmentation-security-0050/FP32/instance-segmentation-security-0050.xml";

std::string bin = "D:/project***odels/instance-segmentation-security-0050/FP32/instance-segmentation-security-0050.bin";

InferenceEngine::Core ie;

std::vector<std::string> coco_labels;

read_coco_labels(coco_labels);

cv::RNG rng(12345);

cv::Mat src = cv::imread("D:/images/sport-girls.png");

cv::namedWindow("input", cv::WINDOW_AUTOSIZE);

int im_h = src.rows;

int im_w = src.cols;

InferenceEngine::CNNNetwork network = ie.ReadNetwork(xml, bin);

InferenceEngine::InputsDataMap inputs = network.getInputsInfo();

InferenceEngine::OutputsDataMap outputs = network.getOutputsInfo();

std::string image_input_name = "";

std::string image_info_name = "";

int in_index = 0;

for (auto item : inputs) {

if (in_index == 0) {

image_input_name = item.first;

auto input_data = item.second;

input_data->setPrecision(Precision::U8);

input_data->setLayout(Layout::NCHW);

}

else {

image_info_name = item.first;

auto input_data = item.second;

input_data->setPrecision(Precision::FP32);

}

in_index++;

}

for (auto item : outputs) {

std::string output_name = item.first;

auto output_data = item.second;

output_data->setPrecision(Precision::FP32);

std::cout << "output name: " << output_name << std::endl;

}

auto executable_network = ie.LoadNetwork(network, "CPU");

auto infer_request = executable_network.CreateInferRequest();

auto input = infer_request.GetBlob(image_input_name);

matU8ToBlob<uchar>(src, input);

auto input2 = infer_request.GetBlob(image_info_name);

auto imInfoDim = inputs.find(image_info_name)->second->getTensorDesc().getDims()[1];

InferenceEngine::MemoryBlob::Ptr minput2 = InferenceEngine::as<InferenceEngine::MemoryBlob>(input2);

auto minput2Holder = minput2->wmap();

float *p = minput2Holder.as<InferenceEngine::PrecisionTrait<InferenceEngine::Precision::FP32>::value_type *>();

p[0] = static_cast<float>(inputs[image_input_name]->getTensorDesc().getDims()[2]);

p[1] = static_cast<float>(inputs[image_input_name]->getTensorDesc().getDims()[3]);

p[2] = 1.0f;

infer_request.Infer();

float w_rate = static_cast<float>(im_w) / 480.0;

float h_rate = static_cast<float>(im_h) / 480.0;

auto scores = infer_request.GetBlob("scores");

auto boxes = infer_request.GetBlob("boxes");

auto clazzes = infer_request.GetBlob("classes");

auto raw_masks = infer_request.GetBlob("raw_masks");

const float* score_data = static_cast<PrecisionTrait<Precision::FP32>::value_type*>(scores->buffer());

const float* boxes_data = static_cast<PrecisionTrait<Precision::FP32>::value_type*>(boxes->buffer());

const float* clazzes_data = static_cast<PrecisionTrait<Precision::FP32>::value_type*>(clazzes->buffer());

const auto raw_masks_data = static_cast<PrecisionTrait<Precision::FP32>::value_type*>(raw_masks->buffer());

const SizeVector scores_outputDims = scores->getTensorDesc().getDims();

const SizeVector boxes_outputDims = boxes->getTensorDesc().getDims();

const SizeVector mask_outputDims = raw_masks->getTensorDesc().getDims();

const int max_count = scores_outputDims[0];

const int object_size = boxes_outputDims[1];

printf("mask NCHW=[%zu, %zu, %zu, %zu]\n", mask_outputDims[0], mask_outputDims[1], mask_outputDims[2], mask_outputDims[3]);

int mask_h = mask_outputDims[2];

int mask_w = mask_outputDims[3];

size_t box_stride = mask_h * mask_w * mask_outputDims[1];

for (int n = 0; n < max_count; n++) {

float confidence = score_data[n];

float xmin = boxes_data[n*object_size] * w_rate;

float ymin = boxes_data[n*object_size + 1] * h_rate;

float xmax = boxes_data[n*object_size + 2] * w_rate;

float ymax = boxes_data[n*object_size + 3] * h_rate;

if (confidence > 0.5) {

cv::Scalar color(rng.uniform(0, 255), rng.uniform(0, 255), rng.uniform(0, 255));

cv::Rect box;

float x1 = std::min(std::max(0.0f, xmin), static_cast<float>(im_w));

float y1 = std::min(std::max(0.0f,ymin), static_cast<float>(im_h));

float x2 = std::min(std::max(0.0f, xmax), static_cast<float>(im_w));

float y2 = std::min(std::max(0.0f, ymax), static_cast<float>(im_h));

box.x = static_cast<int>(x1);

box.y = static_cast<int>(y1);

box.width = static_cast<int>(x2 - x1);

box.height = static_cast<int>(y2 - y1);

int label = static_cast<int>(clazzes_data[n]);

std::cout <<"confidence: "<< confidence<<" class name: "<< coco_labels[label] << std::endl;

// parse the mask

float* mask_arr = raw_masks_data + box_stride * n + mask_h * mask_w * label;

cv::Mat mask_mat(mask_h, mask_w, CV_32FC1, mask_arr);

cv::Mat roi_img = src(box);

cv::Mat resized_mask_mat(box.height, box.width, CV_32FC1);

cv::resize(mask_mat, resized_mask_mat, cv::Size(box.width, box.height));

cv::Mat uchar_resized_mask(box.height, box.width, CV_8UC3,color);

roi_img.copyTo(uchar_resized_mask, resized_mask_mat <= 0.5);

cv::addWeighted(uchar_resized_mask, 0.7, roi_img, 0.3, 0.0f, roi_img);

cv::putText(src, coco_labels[label].c_str(), box.tl()+(box.br()-box.tl())/2, cv::FONT_HERSHEY_PLAIN, 1.0, cv::Scalar(0, 0, 255), 1, 8);

}

}

cv::imshow("input", src);

cv::imwrite("D:/sport-girls.png", src);

cv::waitKey(0);

return 0;

}

The final test result is the annotated image shown in the "input" window and saved to D:/sport-girls.png.
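
The post-processing inside the detection loop can also be checked without OpenVINO or OpenCV. The following is a simplified standard-library sketch of two of its steps: scaling a box from the 480x480 network input back to the original image with clamping, and thresholding a mask heatmap at 0.5. `Box`, `scale_and_clamp`, and `binarize` are hypothetical names, and the mask resize and color blending steps are left out.

```cpp
#include <algorithm>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Rect.
struct Box {
    int x;
    int y;
    int width;
    int height;
};

// Scale a box predicted on the net_w x net_h input to the original image
// and clamp it to the image borders.
Box scale_and_clamp(float xmin, float ymin, float xmax, float ymax,
                    int im_w, int im_h,
                    float net_w = 480.0f, float net_h = 480.0f) {
    const float w_rate = static_cast<float>(im_w) / net_w;
    const float h_rate = static_cast<float>(im_h) / net_h;
    const float x1 = std::min(std::max(0.0f, xmin * w_rate), static_cast<float>(im_w));
    const float y1 = std::min(std::max(0.0f, ymin * h_rate), static_cast<float>(im_h));
    const float x2 = std::min(std::max(0.0f, xmax * w_rate), static_cast<float>(im_w));
    const float y2 = std::min(std::max(0.0f, ymax * h_rate), static_cast<float>(im_h));
    return { static_cast<int>(x1), static_cast<int>(y1),
             static_cast<int>(x2 - x1), static_cast<int>(y2 - y1) };
}

// Mark every heatmap value strictly above the threshold as foreground (1).
std::vector<unsigned char> binarize(const std::vector<float>& mask,
                                    float threshold = 0.5f) {
    std::vector<unsigned char> out(mask.size());
    for (std::size_t i = 0; i < mask.size(); ++i) {
        out[i] = mask[i] > threshold ? 1 : 0;
    }
    return out;
}
```

A box that clamps to zero width or height cannot be resized or blended, so a caller would likely want to skip such detections before touching the mask.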
